How an AI Music Generator Reframes the Music Creation Workflow

The difficulty of making music has never been about lacking ideas. It has always been about the distance between imagination and execution. Most people can describe a feeling, a scene, or even a full song in their head, yet very few can translate that into an actual track without spending years learning tools and theory. This gap is where an AI Music Generator becomes meaningful.

Instead of forcing users to adapt to software, the system adapts to how people naturally think. You describe what you want, and something tangible appears. In my testing, this does not eliminate effort entirely, but it changes where effort is applied. The challenge shifts from technical execution to clarity of intent.

That change alone makes music creation feel less like a specialized skill and more like an accessible process.

Why Traditional Music Workflows Create Friction

High Entry Barrier Before Output Exists

Conventional music production requires:

- understanding digital audio workstations

- familiarity with instruments or MIDI

- knowledge of rhythm, harmony, and structure

Before hearing anything meaningful, a user must already invest significant time.

Slow Feedback Loops Limit Exploration

Each idea requires:

- setup

- arrangement

- rendering

This slows down experimentation. Many ideas never get tested because the cost of trying them is too high.

Technical Skills Shape Creative Outcomes

In many cases, what gets created depends on what the user knows how to execute, not necessarily on what they want to express.

This introduces a constraint that is not purely creative.

How AI Systems Change The Starting Point

Language Becomes The Primary Interface

Instead of operating tools, users rely on descriptions of mood, style, and instrumentation, or on lyrics they supply. This aligns more closely with how people think about music.

Immediate Output Reduces Uncertainty

Instead of only imagining how an idea might sound:

- users can generate and listen immediately

- ideas become testable in seconds

This creates a faster feedback loop.

Iteration Replaces Construction

Rather than building step by step:

- users generate multiple versions

- evaluate and refine

The process becomes cyclical rather than linear.

What Actually Happens Behind The Scenes

Step One: Interpreting Text Into Musical Parameters

When a prompt is entered, the system translates:

- descriptive words into tempo, key, and style

- emotional cues into harmonic tendencies

This is where intent is first converted into structure.
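
To make this step concrete, here is a deliberately simplified sketch. It is not how any particular platform works: the keyword tables, the `MusicParams` fields, and the `interpret_prompt` function are all hypothetical, and a real system would learn these associations from data rather than look them up. It covers only tempo, major or minor mode, and style.

```python
from dataclasses import dataclass

# Hypothetical keyword tables, for illustration only.
# A real system would learn these associations instead of hard-coding them.
TEMPO_HINTS = {"calm": 70, "relaxed": 80, "upbeat": 120, "energetic": 140}
MODE_HINTS = {"sad": "minor", "dark": "minor", "happy": "major", "bright": "major"}
STYLE_HINTS = ("lo-fi", "rock", "jazz", "ambient")


@dataclass
class MusicParams:
    tempo_bpm: int = 100         # default when no tempo cue is found
    mode: str = "major"          # default when no emotional cue is found
    style: str = "unspecified"   # default when no style word is found


def interpret_prompt(prompt: str) -> MusicParams:
    """Map descriptive words in a prompt to coarse musical parameters."""
    words = prompt.lower().replace(",", " ").split()
    params = MusicParams()
    for word in words:
        if word in TEMPO_HINTS:
            params.tempo_bpm = TEMPO_HINTS[word]
        if word in MODE_HINTS:
            params.mode = MODE_HINTS[word]
        if word in STYLE_HINTS:
            params.style = word
    return params


params = interpret_prompt("a calm, sad lo-fi track for studying")
print(params)
```

Even in this toy version, the prompt only steers the result. Anything the description leaves out falls back to a default.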

Step Two: Generating Musical Components

The system constructs:

- chord progressions

- melodies

- rhythmic patterns

These are assembled based on learned patterns from large datasets.
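
As a rough illustration of assembling material from patterns, the sketch below walks a small chord transition table. The table is hand-written and hypothetical, standing in for tendencies a real generator would learn from data, and it covers chord progressions only, not melodies or rhythm. The `seed` parameter is also an assumption of this sketch, used to show why two runs can differ.

```python
import random

# Hypothetical transition table over Roman-numeral chords in a major key.
# It stands in for tendencies a real generator would learn from data.
TRANSITIONS = {
    "I": ["IV", "V", "vi"],
    "IV": ["I", "V"],
    "V": ["I", "vi"],
    "vi": ["IV", "V"],
}


def generate_progression(length: int, seed: int) -> list[str]:
    """Walk the transition table, starting on the tonic chord."""
    rng = random.Random(seed)  # seeded so the same seed repeats the same walk
    chords = ["I"]
    while len(chords) < length:
        chords.append(rng.choice(TRANSITIONS[chords[-1]]))
    return chords


# Different seeds may take different paths through the same table.
print(generate_progression(4, seed=1))
print(generate_progression(4, seed=2))
```

Each step depends on a random choice, so the same table can yield many progressions. That is one simple way to picture why outputs vary between generations.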

Step Three: Rendering Final Audio Output

All components are combined into:

- a complete audio track

- with optional vocals

- ready for playback or download

The result is a finished piece rather than a fragment.
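
To show what "rendering" means at the lowest level, the sketch below turns three note frequencies into playable audio data using only the Python standard library. It uses plain sine-wave synthesis, which is a stand-in and not the technique AI music platforms use to produce audio. The function names and the choice of notes are assumptions made for illustration.

```python
import io
import math
import struct
import wave
from typing import BinaryIO

SAMPLE_RATE = 44100  # samples per second


def render_notes(freqs_hz: list[float], seconds_per_note: float) -> bytes:
    """Render each frequency as a sine tone and return 16-bit mono PCM."""
    frames = bytearray()
    samples_per_note = int(SAMPLE_RATE * seconds_per_note)
    for freq in freqs_hz:
        for n in range(samples_per_note):
            value = 0.3 * math.sin(2 * math.pi * freq * n / SAMPLE_RATE)
            frames += struct.pack("<h", int(value * 32767))
    return bytes(frames)


def write_wav(target: BinaryIO, pcm: bytes) -> None:
    """Wrap raw PCM samples in a WAV container."""
    with wave.open(target, "wb") as wav_file:
        wav_file.setnchannels(1)   # mono
        wav_file.setsampwidth(2)   # 16-bit samples
        wav_file.setframerate(SAMPLE_RATE)
        wav_file.writeframes(pcm)


# C4, E4, G4: the notes of a C major chord, played one after another.
pcm = render_notes([261.63, 329.63, 392.00], seconds_per_note=0.5)
buffer = io.BytesIO()  # in-memory file; a file opened in "wb" mode also works
write_wav(buffer, pcm)
print(len(buffer.getvalue()), "bytes of WAV data")
```

The sketch separates two things that are easy to conflate: deciding which notes to play, and producing the samples a listener actually hears.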

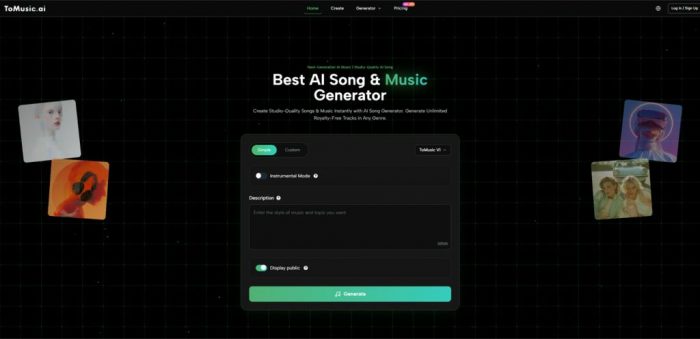

How To Use The Platform In Practice

Step One: Enter Prompt Or Lyrics Input

Users can:

- describe a sound or feeling

- or input structured lyrics

The input defines the direction of the output.

Step Two: Select Style And Basic Preferences

Available options typically include:

- genre

- mood

- vocal inclusion

These help guide the generation process.

Step Three: Generate And Compare Results

The system produces multiple variations:

- outputs differ even with the same input

- selecting the best version is part of the process

Iteration improves alignment with intent.

Comparing AI-Based And Traditional Workflows

| Aspect | Traditional Production | AI-Based Generation |
| --- | --- | --- |
| Learning Curve | Steep | Minimal |
| Time To First Output | Long | Short |
| Creative Control | Direct and precise | Indirect through prompts |
| Iteration Cost | High | Low |
| Output Variety | Limited by effort | Naturally diverse |

This comparison shows a redistribution of effort rather than a replacement.

Where This Approach Is Most Effective

Content Creation With Time Constraints

For creators working on:

- short videos

- social media content

- repeated formats

speed and flexibility are valuable.

Early Stage Idea Exploration

Instead of committing early:

- users can test multiple directions

- refine based on actual output

This reduces uncertainty.

Non-Technical Creative Workflows

People without production skills can:

- express ideas naturally

- rely on the system for execution

This broadens participation in music creation.

Observed Strengths In Real Use

Fast Translation From Idea To Output

In my experience:

- initial results appear quickly

- ideas become tangible almost instantly

This changes how often users experiment.

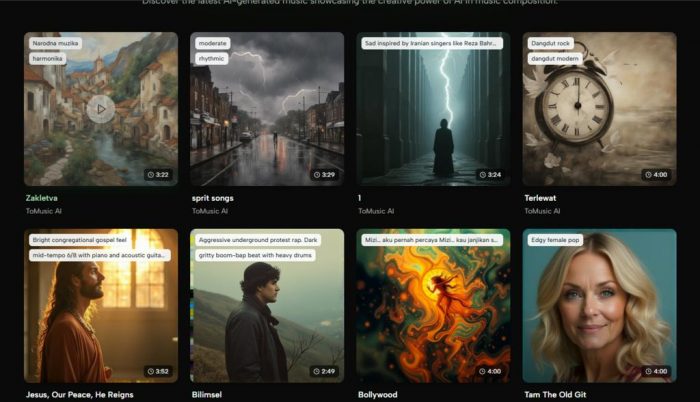

High Variation Encourages Exploration

Each generation produces:

- slightly different interpretations

- unexpected results that can inspire new ideas

Reduced Dependence On Technical Knowledge

Users focus on:

- describing intent

- evaluating outcomes

rather than operating tools.

Limitations That Should Be Acknowledged

Prompt Sensitivity Affects Consistency

Small changes in wording can lead to:

- significantly different outputs

- difficulty in reproducing results

Limited Fine-Grained Control

Users cannot always specify:

- exact arrangement details

- precise timing or instrumentation adjustments

Iteration Remains Necessary

In practice:

- the first result is rarely final

- multiple attempts improve quality

This introduces a different type of effort.

How Creative Roles Are Quietly Changing

Instead of building every element manually, users:

- define intent

- review generated outputs

- select the most suitable version

This shifts creativity toward decision-making and curation.

What This Suggests About Future Creative Tools

The pattern seen here is part of a broader shift from tool-based interaction to intent-based interaction.

Music is only one example. Similar changes are appearing in:

- image generation

- video creation

- text production

A Practical Way To Understand The System

It may be useful to think of this type of platform as a translation layer between human ideas and digital output, rather than as a replacement for traditional production tools.

Why This Matters For Creative Workflows

Reducing the gap between idea and execution changes how often ideas are explored. When the cost of trying something is low:

- experimentation increases

- creative blocks decrease

- iteration becomes natural

The system does not remove complexity entirely, but it moves it away from the user’s immediate experience.